Robots: any type of “bot” that visits websites on the internet. The most common example is the search engine crawler, which “crawls” the web to help search engines like Google index and rank the billions of pages on the internet.

Robots.txt: the practical implementation of that standard. It lets you control how participating bots interact with your site. For example, you can block bots entirely from certain areas of your site.

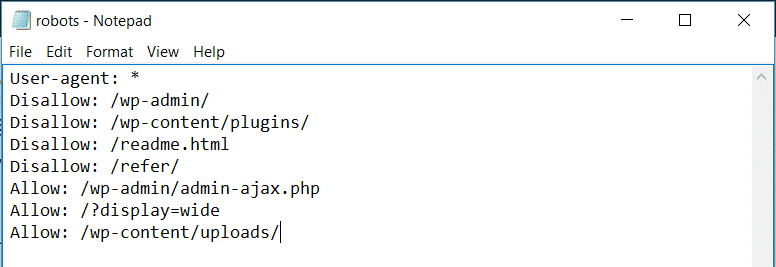

Here is an example of a WordPress robots.txt file.

What does an ideal robots.txt file look like?

An ideal robots.txt file usually names a user agent in the first line, where the user agent is the name of the search bot you are trying to communicate with, such as Googlebot or Bingbot. You can use an asterisk (*) to address all bots.

The next lines contain Allow or Disallow instructions, which tell search engines which parts of your site they may crawl and which parts they should stay out of.
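As a rough illustration of the layout described above, the sketch below feeds a small robots.txt to Python's standard `urllib.robotparser` module and asks which URLs a bot may fetch. The rules, the `example.com` domain, and the paths are assumptions chosen for illustration, not taken from a particular site. This parser applies the first rule that matches, so the more specific Allow line is placed before the broader Disallow line.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: the first line names the user agent (* = all bots),
# the following lines say what may and may not be crawled.
SAMPLE_RULES = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
"""


def build_parser(rules_text):
    """Parse robots.txt content held in a string."""
    parser = RobotFileParser()
    parser.parse(rules_text.splitlines())
    return parser


sample_parser = build_parser(SAMPLE_RULES)

# Ask whether a bot identifying itself as Googlebot may fetch each URL.
for url in (
    "https://example.com/wp-admin/",
    "https://example.com/wp-admin/admin-ajax.php",
    "https://example.com/blog/hello-world/",
):
    print(url, sample_parser.can_fetch("Googlebot", url))
```

With these assumed rules, the admin area is blocked, the single explicitly allowed file inside it stays reachable, and everything else is crawlable because no rule mentions it.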

How to submit the robots.txt file to Google?

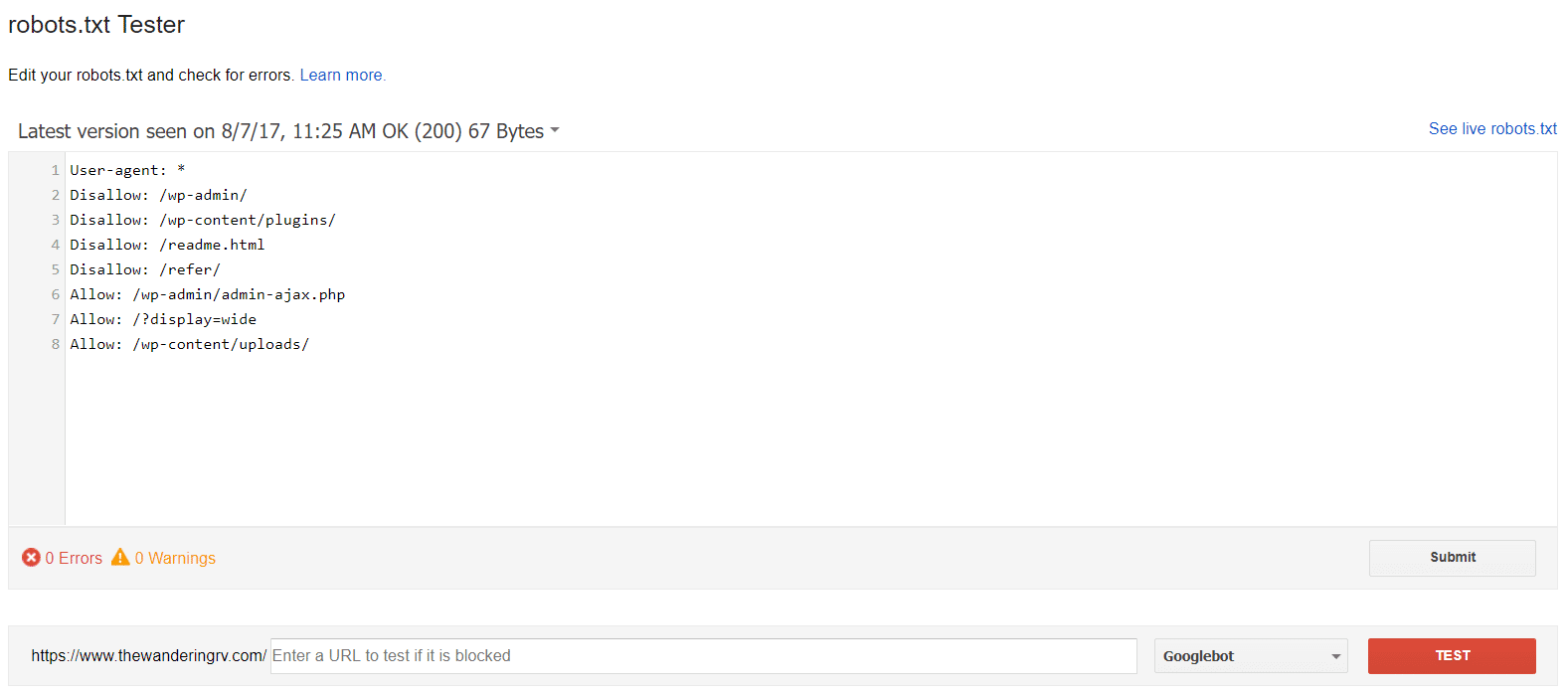

Once you are done, you can submit it to Google using Google Search Console.

You may test it using Google’s robots.txt tool.

What To Put In Your Robots.txt File?

Robots.txt lets you control how robots interact with your site. You do that with two core commands:

- User-agent – this lets you target specific bots. User agents are what bots use to identify themselves. With them, you could, for example, create a rule that applies to Bing, but not to Google.

- Disallow – this lets you tell robots not to access certain areas of your site.
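To make the two commands concrete, here is a minimal sketch of a rule that applies to Bing but not to Google, again checked with Python's standard `urllib.robotparser`. The `/private/` path and the `example.com` domain are hypothetical and only serve the illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rule group that targets only Bing's crawler.
BING_ONLY_RULES = """\
User-agent: Bingbot
Disallow: /private/
"""

bing_parser = RobotFileParser()
bing_parser.parse(BING_ONLY_RULES.splitlines())

private_url = "https://example.com/private/report.html"

# The group names Bingbot, so only Bingbot is kept out of /private/.
print("Bingbot:", bing_parser.can_fetch("Bingbot", private_url))      # False
# No group matches Googlebot, so it is not restricted.
print("Googlebot:", bing_parser.can_fetch("Googlebot", private_url))  # True
```

Because no group in this file mentions Googlebot or the `*` wildcard, Google's crawler falls outside the rule entirely.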

How To Use Robots.txt to Block Access to Your Entire Site:

Suppose you want to block all crawler access to your site. This is unlikely to be needed on a live site, but it does come in handy for a development site. To do that, you would add this code to your WordPress robots.txt file:

User-agent: *

Disallow: /

The asterisk (*) next to User-agent means “all user agents”: it is a wildcard, so the rule applies to every single user agent. The slash (/) next to Disallow says you want to disallow access to every URL that begins with “yourdomain.com/” (which is every single page on your site).
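One way to sanity-check a block-everything rule before uploading it is to run it through Python's standard `urllib.robotparser`. This is only a sketch: the bot names and page paths tested are arbitrary examples, and the two directives are written on consecutive lines because this parser treats a blank line as the end of a rule group.

```python
from urllib.robotparser import RobotFileParser

# The block-everything rule: every user agent, every path.
BLOCK_ALL_RULES = """\
User-agent: *
Disallow: /
"""

block_all_parser = RobotFileParser()
block_all_parser.parse(BLOCK_ALL_RULES.splitlines())

# Arbitrary sample of bots and pages; each combination should be refused.
bots = ("Googlebot", "Bingbot", "SomeOtherBot")
pages = (
    "https://yourdomain.com/",
    "https://yourdomain.com/blog/",
    "https://yourdomain.com/wp-admin/",
)

results = {
    (bot, page): block_all_parser.can_fetch(bot, page)
    for bot in bots
    for page in pages
}

for (bot, page), allowed in results.items():
    print(bot, page, allowed)
```

Every combination should print `False`, since each path on the site begins with the `/` named in the Disallow line.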